C++ Core Guidelines: More Rules about Performance

In this post, I continue my journey through the rules on performance in the C++ Core Guidelines. I will mainly write about design for optimization.

Here are the two rules for today.

Per.7: Design to enable optimization

When I read this title, I immediately think of move semantics. Why? Because you should write your algorithms with move semantics and not with copy semantics. You will automatically get a few benefits.

- Of course, your algorithms use a cheap move instead of an expensive copy.

- Your algorithm is way more stable because a move typically allocates no memory; therefore, you will get no std::bad_alloc exception.

- You can use your algorithm with move-only types such as std::unique_ptr.

Understood! Let me implement a generic swap algorithm that uses move semantics.

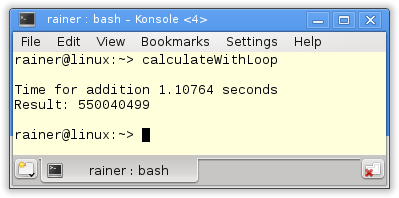

    // swap.cpp

    #include <algorithm>
    #include <cstddef>
    #include <iostream>
    #include <utility>   // std::move
    #include <vector>

    template <typename T>                                // (3)
    void swap(T& a, T& b) noexcept {
        T tmp(std::move(a));
        a = std::move(b);
        b = std::move(tmp);
    }

    class BigArray{

    public:
        BigArray(std::size_t sz): size(sz), data(new int[size]){}

        BigArray(const BigArray& other): size(other.size), data(new int[other.size]){
            std::cout << "Copy constructor" << std::endl;
            std::copy(other.data, other.data + size, data);
        }

        BigArray& operator=(const BigArray& other){      // (1)
            std::cout << "Copy assignment" << std::endl;
            if (this != &other){
                delete [] data;
                data = nullptr;

                size = other.size;
                data = new int[size];
                std::copy(other.data, other.data + size, data);
            }
            return *this;
        }

        ~BigArray(){
            delete[] data;
        }

    private:
        std::size_t size;
        int* data;
    };

    int main(){

        std::cout << std::endl;

        BigArray bigArr1(2011);
        BigArray bigArr2(2017);
        swap(bigArr1, bigArr2);                          // (2)

        std::cout << std::endl;

    }

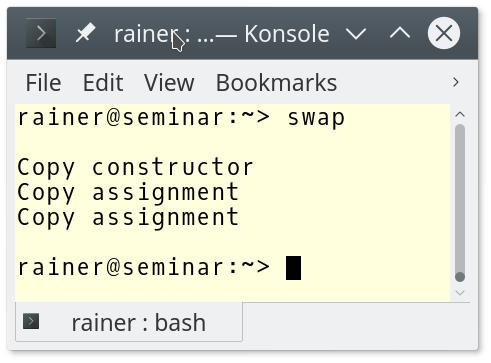

Fine. That was it. No! My coworker gave me his type BigArray. BigArray has a few flaws. I will write about the copy assignment operator (1) later. First of all, I have a more serious concern. BigArray does not support move semantics but only copy semantics. What will happen if I swap the BigArrays in line (2)? My swap algorithm uses move semantics (3) under the hood. Let’s try it out.

Nothing bad will happen. Traditional copy semantics will kick in, and you will get the classical behavior. Copy semantics is a kind of fallback for move semantics. You can also see it the other way around: a move is an optimized copy.


How is that possible? I asked for a move operation in my swap algorithm. The reason is that std::move yields an rvalue. A const lvalue reference can bind to an rvalue, and the copy constructor and the copy assignment operator each take a const lvalue reference. If BigArray had a move constructor or a move assignment operator taking rvalue references, both would have higher priority than their copy counterparts.

Implementing your algorithms with move semantics means that move semantics will automatically kick in if your data types support it. If not, copy semantics will be used as a fallback. In the worst case, you will have the classical behavior.

I said the copy assignment operator has a few flaws. Here they are:
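The fallback can be made visible with a small sketch. The counters, the types CopyOnly and Movable, and the names moveSwap and checkFallback are hypothetical and exist only for this illustration; they are not part of BigArray. The same three-step swap is applied to a type that only supports copying, to a type that also supports moving, and to the move-only std::unique_ptr.

```cpp
#include <cassert>
#include <memory>
#include <utility>

// Hypothetical counters, used only to observe which operations run.
int copies = 0;
int moves = 0;

// Declares copy operations only; therefore, no move operations are generated.
struct CopyOnly {
    CopyOnly() = default;
    CopyOnly(const CopyOnly&) { ++copies; }
    CopyOnly& operator=(const CopyOnly&) { ++copies; return *this; }
};

// Declares both copy and move operations.
struct Movable {
    Movable() = default;
    Movable(const Movable&) { ++copies; }
    Movable& operator=(const Movable&) { ++copies; return *this; }
    Movable(Movable&&) noexcept { ++moves; }
    Movable& operator=(Movable&&) noexcept { ++moves; return *this; }
};

// Same three steps as the swap in this post; renamed to avoid any clash.
template <typename T>
void moveSwap(T& a, T& b) {
    T tmp(std::move(a));
    a = std::move(b);
    b = std::move(tmp);
}

void checkFallback() {
    CopyOnly c1, c2;
    copies = moves = 0;
    moveSwap(c1, c2);                   // rvalues bind to const CopyOnly&
    assert(copies == 3 && moves == 0);

    Movable m1, m2;
    copies = moves = 0;
    moveSwap(m1, m2);                   // the move overloads are preferred
    assert(copies == 0 && moves == 3);

    auto p = std::make_unique<int>(1);  // move-only type
    auto q = std::make_unique<int>(2);
    moveSwap(p, q);
    assert(*p == 2 && *q == 1);
}
```

Calling checkFallback() from main passes all assertions: the algorithm is written once, and each type picks the cheapest operation it offers.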

    BigArray& operator=(const BigArray& other){
        if (this != &other){                                 // (1)
            delete [] data;
            data = nullptr;

            size = other.size;
            data = new int[size];                            // (2)
            std::copy(other.data, other.data + size, data);  // (3)
        }
        return *this;
    }

- I have to check for self-assignment (1). Most of the time, self-assignment will not happen, but I always check for the special case.

- If the allocation (2) fails, *this has already been modified. The size is wrong, and the data is already deleted. This means the copy assignment operator only provides the basic exception guarantee but not the strong one. The basic exception guarantee states that there is no leak after an exception. The strong exception guarantee states that, in case of an exception, the program is rolled back to the state before the operation. Read the Wikipedia article about exception safety for more details.

- Line (3) is identical to the corresponding line in the copy constructor, so the code is duplicated.

You can overcome these flaws by implementing your own swap function. This is already suggested by the C++ Core Guidelines: C.83: For value-like types, consider providing a noexcept swap function. Here is the new BigArray having a non-member swap function and a copy assignment operator using the swap function.

    class BigArray{

    public:
        BigArray(std::size_t sz): size(sz), data(new int[size]){}

        BigArray(const BigArray& other): size(other.size), data(new int[other.size]){
            std::cout << "Copy constructor" << std::endl;
            std::copy(other.data, other.data + size, data);
        }

        BigArray& operator = (BigArray other){                        // (2)
            swap(*this, other);
            return *this;
        }

        ~BigArray(){
            delete[] data;
        }

        // noexcept, as C.83 recommends; requires <utility> for std::swap
        friend void swap(BigArray& first, BigArray& second) noexcept{ // (1)
            std::swap(first.size, second.size);
            std::swap(first.data, second.data);
        }

    private:
        std::size_t size;
        int* data;
    };

The swap function in line (1) is not a member; therefore, a call swap(bigArray1, bigArray2) uses it. The signature of the copy assignment operator in line (2) may surprise you: it takes its argument by value. Because the argument is already a copy, no self-assignment test is necessary. Additionally, the strong exception guarantee holds because *this is only modified after the copy has succeeded, and there is no code duplication. This technique is called the copy-and-swap idiom.
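Here is a minimal sketch of the copy-and-swap idiom in action. Buffer is a hypothetical stand-in for BigArray; the fill value, the accessors size() and front(), and the function checkCopyAndSwap are assumptions added only to make the effect observable.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <utility>

// Hypothetical stand-in for BigArray with accessors for inspection.
class Buffer {
public:
    Buffer(std::size_t sz, int value) : size_(sz), data_(new int[sz]) {
        std::fill(data_, data_ + size_, value);
    }

    Buffer(const Buffer& other) : size_(other.size_), data_(new int[other.size_]) {
        std::copy(other.data_, other.data_ + size_, data_);
    }

    // Copy-and-swap: the parameter is already a copy of the right-hand side.
    // If copying throws, *this has not been touched.
    Buffer& operator=(Buffer other) {
        swap(*this, other);
        return *this;   // other now owns the old data and releases it
    }

    ~Buffer() { delete[] data_; }

    friend void swap(Buffer& first, Buffer& second) noexcept {
        std::swap(first.size_, second.size_);
        std::swap(first.data_, second.data_);
    }

    std::size_t size() const { return size_; }
    int front() const { return data_[0]; }

private:
    std::size_t size_;
    int* data_;
};

void checkCopyAndSwap() {
    Buffer a(3, 7);
    Buffer b(5, 9);

    b = a;                       // deep copy, then swap
    assert(b.size() == 3 && b.front() == 7);

    Buffer& alias = a;
    a = alias;                   // self-assignment needs no special case
    assert(a.size() == 3 && a.front() == 7);

    Buffer c(2, 1);
    swap(a, c);                  // finds the friend swap
    assert(a.size() == 2 && a.front() == 1);
    assert(c.size() == 3 && c.front() == 7);
}
```

The price of the idiom is one extra copy even for self-assignment; in exchange, the assignment either succeeds completely or leaves the object unchanged.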

There are a lot of overloaded versions of std::swap available. The C++ standard provides about 50 overloads.

Per.10: Rely on the static type system

This is a kind of meta-rule in C++. Catch errors at compile-time. I can make my explanation of this rule relatively short because I have already written a few articles on this critical topic:

- Use automatic type deduction with auto (automatically initialized) combined with {}-initialization, and you will get many benefits:

  - The compiler always knows the right type: auto f = 5.0f;.

  - You can never forget to initialize a variable: auto a; will not compile.

  - You can verify with {}-initialization that no narrowing conversion kicks in; therefore, you can guarantee that the automatically deduced type is the type you expected: int i = {f};. The compiler checks in this expression that f can be stored in an int without narrowing. If not, the code is ill-formed, and you will get an error or at least a warning, depending on the compiler. This will not happen without braces: int i = f;.

- Check type properties at compile time with static_assert and the type-traits library. If the check fails, you will get a compile-time error: static_assert(std::is_integral<T>::value, "T should be an integral type!");.

- Make type-safe arithmetic with the user-defined literals and the new built-in literals (user-defined literals): auto distancePerWeek = (5 * 120_km + 2 * 1500_m - 5 * 400_m) / 5;.

- override and final provide guarantees for virtual methods. The compiler checks with override that you actually overrode a virtual method. The compiler guarantees further with final that you cannot override a virtual method that is declared final.

- The null pointer constant nullptr, introduced in C++11, cleans up the ambiguity between the number 0 and the macro NULL.
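Several of these points can be combined in one small sketch. All names in it (twice, Meters, the suffixes _km and _m, isPointerOverload, Shape, Square, checkStaticTypeSystem) are hypothetical and chosen only for illustration; in particular, Meters is a deliberately tiny unit type and not the implementation from my earlier articles.

```cpp
#include <cassert>
#include <type_traits>

// static_assert plus type traits: non-integral T is rejected at compile time.
template <typename T>
T twice(T value) {
    static_assert(std::is_integral<T>::value, "T should be an integral type!");
    return value * 2;
}

// A minimal distance type; all distances are stored in meters.
struct Meters { double value; };

constexpr Meters operator""_km(unsigned long long v) { return Meters{static_cast<double>(v) * 1000.0}; }
constexpr Meters operator""_m(unsigned long long v) { return Meters{static_cast<double>(v)}; }

constexpr Meters operator+(Meters a, Meters b) { return Meters{a.value + b.value}; }
constexpr Meters operator-(Meters a, Meters b) { return Meters{a.value - b.value}; }
constexpr Meters operator*(double factor, Meters m) { return Meters{factor * m.value}; }
constexpr Meters operator/(Meters m, double divisor) { return Meters{m.value / divisor}; }

// Evaluated entirely at compile time: (600000 + 3000 - 2000) / 5 meters.
constexpr Meters distancePerWeek = (5 * 120_km + 2 * 1500_m - 5 * 400_m) / 5;
static_assert(distancePerWeek.value == 120200.0, "unexpected distance");

// nullptr selects the pointer overload, the literal 0 selects the int overload.
inline bool isPointerOverload(int) { return false; }
inline bool isPointerOverload(const void*) { return true; }

// override: the compiler verifies that corners() really overrides a virtual method.
struct Shape {
    virtual ~Shape() = default;
    virtual int corners() const { return 0; }
};

struct Square final : Shape {   // final: nobody can derive from Square
    int corners() const override { return 4; }
};

void checkStaticTypeSystem() {
    assert(twice(21) == 42);
    // twice(2.5);               // would not compile: static_assert fails

    auto f = 5.0f;               // deduced as float
    static_assert(std::is_same<decltype(f), float>::value, "f should be a float");
    // int i = {f};              // narrowing: rejected or at least diagnosed
    (void)f;

    assert(isPointerOverload(nullptr));
    assert(!isPointerOverload(0));

    Square square;
    const Shape& shape = square;
    assert(shape.corners() == 4);
}
```

Most of the checks in this sketch cost nothing at run time: if one of the static_assert expressions were false, the program would simply not compile.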

What’s next?

My journey through the rules on performance will go on. In the next post, I will, in particular, write about how to move computation from runtime to compile-time and how you should access memory.

