C++ Core Guidelines: The Remaining Rules about Lock-Free Programming

Today, I will finish my story on concurrency and lock-free programming. Four rules on lock-free programming are left in the C++ Core Guidelines.

First of all, here are the rules for the current post.

- CP.101: Distrust your hardware/compiler combination

- CP.102: Carefully study the literature

- CP.110: Do not write your own double-checked locking for initialization

- CP.111: Use a conventional pattern if you really need double-checked locking

I must admit I annoyed a few German readers with my last two posts about lock-free programming. They got the impression that I don't like lock-free programming. Wrong! I'm very interested in lock-free programming, but before you use it, you have to answer two questions.

- Does lock-free programming solve my performance bottleneck?

- Do I understand lock-free programming well enough to use it?

As long as you cannot answer both questions with a clear yes, you should continue with rule CP.102.

CP.101: Distrust your hardware/compiler combination

What does that mean: distrust your hardware/compiler combination? Let me put it another way: when you break sequential consistency, you will, with high probability, also break your intuition. Here is my example:

    #include <atomic>
    #include <iostream>
    #include <thread>

    std::atomic<int> x{0};
    std::atomic<int> y{0};

    void writing(){
      x.store(2000);                       // (1)
      y.store(11);                         // (2)
    }

    void reading(){
      std::cout << y.load() << " ";        // (3)
      std::cout << x.load() << std::endl;  // (4)
    }

    int main(){
      std::thread thread1(writing);
      std::thread thread2(reading);
      thread1.join();
      thread2.join();
    }

I have a question about this short example: which values for y and x are possible in lines (3) and (4)? x and y are atomic; therefore, no data race is possible. I also don't specify the memory ordering, so sequential consistency applies. Sequential consistency means:

- Each thread performs its operations in the specified sequence: line (1) happens before line (2), and line (3) happens before line (4).

- There is a global order of all operations on all threads.

If you combine these two properties of sequential consistency, only one combination of x and y is not possible: y == 11 and x == 0.
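To make this argument tangible, here is a small sketch that simulates sequential consistency instead of relying on real threads. It enumerates every global order of the four operations that respects the program order of both threads and collects the (y, x) pairs the reader can observe. The names Op and sequentiallyConsistentResults are my own illustration and not part of the original example.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <set>
#include <utility>

// The four operations of the example:
// (1) x.store(2000), (2) y.store(11), (3) read y, (4) read x.
enum class Op { StoreX, StoreY, LoadY, LoadX };

// Returns every (y, x) pair the reading thread can observe when all four
// operations are placed into one global order that respects program order.
std::set<std::pair<int, int>> sequentiallyConsistentResults() {
    // Already in ascending order, as std::next_permutation requires.
    std::array<Op, 4> order{Op::StoreX, Op::StoreY, Op::LoadY, Op::LoadX};
    std::set<std::pair<int, int>> results;
    do {
        auto pos = [&order](Op op) {
            return std::find(order.begin(), order.end(), op) - order.begin();
        };
        // Program order: (1) before (2), and (3) before (4).
        if (pos(Op::StoreX) > pos(Op::StoreY)) continue;
        if (pos(Op::LoadY) > pos(Op::LoadX)) continue;

        int x = 0, y = 0;          // simulated shared variables
        int seenY = 0, seenX = 0;  // values observed by the reader
        for (Op op : order) {
            switch (op) {
                case Op::StoreX: x = 2000; break;
                case Op::StoreY: y = 11; break;
                case Op::LoadY:  seenY = y; break;
                case Op::LoadX:  seenX = x; break;
            }
        }
        results.insert({seenY, seenX});
    } while (std::next_permutation(order.begin(), order.end()));

    assert(!results.empty());  // at least one order respects program order
    return results;
}
```

Six interleavings respect the program order, and they produce three distinct results: (0, 0), (0, 2000), and (11, 2000). The pair (11, 0) never shows up.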

Now, let me break the sequential consistency and maybe your intuition. Here is the weakest of all memory orderings: the relaxed semantics.

    #include <atomic>
    #include <iostream>
    #include <thread>

    std::atomic<int> x{0};
    std::atomic<int> y{0};

    void writing(){
      x.store(2000, std::memory_order_relaxed);                       // (1)
      y.store(11, std::memory_order_relaxed);                         // (2)
    }

    void reading(){
      std::cout << y.load(std::memory_order_relaxed) << " ";          // (3)
      std::cout << x.load(std::memory_order_relaxed) << std::endl;    // (4)
    }

    int main(){
      std::thread thread1(writing);
      std::thread thread2(reading);
      thread1.join();
      thread2.join();
    }

Two unintuitive phenomena can happen. First, thread2 can see the operations of thread1 in a different sequence. Second, thread1 can reorder its instructions because they are not performed on the same atomic. What does that mean for the possible values of x and y? Now y == 11 and x == 0 is a valid result. I want to be a little more specific: which result is possible depends on your hardware.

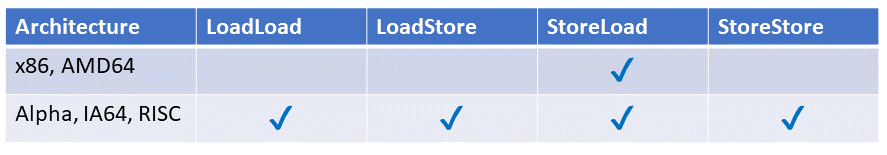

For example, operation reordering is quite conservative on x86 or AMD64: only stores can be reordered after later loads. On Alpha, IA64, or RISC (ARM) architectures, however, all four possible reorderings of store and load operations are allowed.
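If you want to rule out y == 11 and x == 0 without going back to full sequential consistency, one common option is acquire-release semantics on y. The following is only a sketch under that assumption; the helpers runOnce and countForbiddenOutcomes are hypothetical names I introduce for the demonstration. When the acquire load in line (3) reads the 11 written by the release store in line (2), the store in line (1) is guaranteed to be visible in line (4).

```cpp
#include <atomic>
#include <cassert>
#include <thread>
#include <utility>

std::atomic<int> x{0};
std::atomic<int> y{0};

void writing() {
    x.store(2000, std::memory_order_relaxed);  // (1)
    y.store(11, std::memory_order_release);    // (2) publishes (1)
}

// Returns the observed pair (y, x).
std::pair<int, int> reading() {
    int seenY = y.load(std::memory_order_acquire);  // (3) pairs with (2)
    int seenX = x.load(std::memory_order_relaxed);  // (4)
    return {seenY, seenX};
}

// Hypothetical helper: runs writer and reader once, concurrently.
std::pair<int, int> runOnce() {
    x.store(0);
    y.store(0);
    std::pair<int, int> seen{0, 0};
    std::thread thread1(writing);
    std::thread thread2([&seen] { seen = reading(); });
    thread1.join();
    thread2.join();
    // Sanity check: only the initial or the stored values can be observed.
    assert(seen.first == 0 || seen.first == 11);
    assert(seen.second == 0 || seen.second == 2000);
    return seen;
}

// Hypothetical helper: counts how often y == 11 and x == 0 was observed.
int countForbiddenOutcomes(int runs) {
    int forbidden = 0;
    for (int i = 0; i < runs; ++i) {
        std::pair<int, int> seen = runOnce();
        if (seen.first == 11 && seen.second == 0) ++forbidden;
    }
    return forbidden;
}
```

A call such as countForbiddenOutcomes(200) must return 0 on every platform. Keep in mind that a test like this cannot prove correctness; the guarantee comes from the memory model, not from the observation.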


If you don’t believe me, I suggest you read the following rule CP.102.

CP.102: Carefully study the literature

There is not much to add to this rule. At least I can provide links to the literature.

- Anthony Williams: C++ Concurrency in Action. Manning Publications.

- Boehm, Adve, “You Don’t Know Jack About Shared Variables or Memory Models”, Communications of the ACM, Feb 2012.

- Boehm, “Threads Basics”, HPL TR 2009-259.

- Adve, Boehm, “Memory Models: A Case for Rethinking Parallel Languages and Hardware”, Communications of the ACM, August 2010.

- Boehm, Adve, “Foundations of the C++ Concurrency Memory Model”, PLDI 08.

- Mark Batty, Scott Owens, Susmit Sarkar, Peter Sewell, and Tjark Weber, “Mathematizing C++ Concurrency”, POPL 2011.

- Damian Dechev, Peter Pirkelbauer, and Bjarne Stroustrup: Understanding and Effectively Preventing the ABA Problem in Descriptor-based Lock-free Designs. 13th IEEE Computer Society ISORC 2010 Symposium. May 2010.

- Damian Dechev and Bjarne Stroustrup: Scalable Non-blocking Concurrent Objects for Mission Critical Code. ACM OOPSLA’09. October 2009

- Damian Dechev, Peter Pirkelbauer, Nicolas Rouquette, and Bjarne Stroustrup: Semantically Enhanced Containers for Concurrent Real-Time Systems. Proc. 16th Annual IEEE International Conference and Workshop on the Engineering of Computer Based Systems (IEEE ECBS). April 2009

CP.110: Do not write your own double-checked locking for initialization, and CP.111: Use a conventional pattern if you really need double-checked locking

I know I should not write about the singleton pattern, but the double-checked locking pattern is infamous for initializing a singleton in a thread-safe way. Here we are:

    #include <mutex>

    std::mutex myMutex;

    class MySingleton{
    public:
      static MySingleton& getInstance(){
        std::lock_guard<std::mutex> myLock(myMutex);   // (1)
        if( !instance ) instance= new MySingleton();
        return *instance;
      }
    private:
      MySingleton();
      ~MySingleton();
      MySingleton(const MySingleton&)= delete;
      MySingleton& operator=(const MySingleton&)= delete;

      static MySingleton* instance;
    };

    MySingleton::MySingleton()= default;
    MySingleton::~MySingleton()= default;
    MySingleton* MySingleton::instance= nullptr;

This singleton implementation is thread-safe because each access to the instance is protected by a std::lock_guard (line (1)). The implementation is correct but too expensive, because a heavyweight lock guards every read access to the singleton. Apart from the initialization of the singleton, no synchronization is necessary. Here the double-checked locking pattern comes to our rescue.

    static MySingleton& getInstance(){
      if ( !instance ){                                  // (1)
        std::lock_guard<std::mutex> myLock(myMutex);     // (2)
        if( !instance ) instance= new MySingleton();     // (3)
      }
      return *instance;
    }

The getInstance method uses an inexpensive pointer comparison in line (1) instead of an expensive lock. Only if the pointer is a nullptr is the expensive lock taken (line (2)). Because another thread could initialize the singleton between the pointer comparison (line (1)) and the lock (line (2)), an additional pointer comparison in line (3) is necessary. Hence the name: two checks and one lock.

Smart? Yes! Thread-safe? No!

What is the problem? The call instance= new MySingleton() in line (3) consists of at least three steps.

1. Allocate memory for MySingleton

2. Create the MySingleton object in the memory

3. Let instance refer to the MySingleton object

The problem is that there is no guarantee about the sequence of these three steps. For example, the processor can reorder the steps to the sequence 1, 3, 2. Then the memory is allocated first, and next instance already refers to a singleton that has not been constructed yet. If another thread tries to access the singleton at that moment, it compares the pointer and assumes that the singleton is fully initialized.

The consequence is simple: the program has undefined behavior.
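Here is a minimal sketch of what a conventional pattern in the sense of CP.111 could look like for this singleton, assuming the raw pointer is replaced by a std::atomic<MySingleton*> that is read with acquire and written with release semantics. Making myMutex a static member and the helper allThreadsSeeSameInstance are my assumptions for the illustration; treat it as one possible variant, not as the definitive implementation.

```cpp
#include <atomic>
#include <cassert>
#include <cstddef>
#include <mutex>
#include <thread>
#include <vector>

class MySingleton {
public:
    static MySingleton& getInstance() {
        // First check: an acquire load, so a non-null pointer implies
        // that the fully constructed object is visible to this thread.
        MySingleton* p = instance.load(std::memory_order_acquire);  // (1)
        if (!p) {
            std::lock_guard<std::mutex> myLock(myMutex);             // (2)
            // Second check: the mutex already orders this load.
            p = instance.load(std::memory_order_relaxed);            // (3)
            if (!p) {
                p = new MySingleton();
                // Publish the pointer only after construction finished.
                instance.store(p, std::memory_order_release);
            }
        }
        return *p;
    }

    MySingleton(const MySingleton&) = delete;
    MySingleton& operator=(const MySingleton&) = delete;

private:
    MySingleton() = default;
    ~MySingleton() = default;

    static std::atomic<MySingleton*> instance;
    static std::mutex myMutex;
};

std::atomic<MySingleton*> MySingleton::instance{nullptr};
std::mutex MySingleton::myMutex;

// Hypothetical demonstration helper: calls getInstance() from several
// threads and reports whether all of them got the same object.
bool allThreadsSeeSameInstance(std::size_t threadCount) {
    assert(threadCount > 0);
    std::vector<MySingleton*> seen(threadCount);
    std::vector<std::thread> threads;
    for (std::size_t i = 0; i < threadCount; ++i) {
        threads.emplace_back([&seen, i] { seen[i] = &MySingleton::getInstance(); });
    }
    for (auto& t : threads) t.join();
    for (MySingleton* p : seen) {
        if (p != seen[0]) return false;
    }
    return true;
}
```

The release store pairs with the acquire load in line (1), so the reordering of the three steps described above can no longer leak a half-constructed object to another thread.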

I have already written a post on the thread-safe singleton pattern that was discussed quite emotionally. It includes different implementations with std::lock_guard, std::call_once and std::once_flag, the Meyers singleton, and atomic versions based on the double-checked locking pattern. You can read the details of these implementations and their performance characteristics on Linux and Windows in that post: Thread-Safe Initialization of a Singleton.
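In the spirit of CP.110, the two simplest of these alternatives let the language or the library do the double-checked work for you. The following is a short sketch; the class names MeyersSingleton and OnceSingleton are chosen only for this illustration.

```cpp
#include <cassert>
#include <mutex>

// Meyers singleton: since C++11, the initialization of a local static
// variable is guaranteed to happen exactly once, even with many threads.
class MeyersSingleton {
public:
    static MeyersSingleton& getInstance() {
        static MeyersSingleton instance;
        return instance;
    }

    MeyersSingleton(const MeyersSingleton&) = delete;
    MeyersSingleton& operator=(const MeyersSingleton&) = delete;

private:
    MeyersSingleton() = default;
    ~MeyersSingleton() = default;
};

// std::call_once together with std::once_flag: the callable runs exactly
// once; all other callers wait until it has finished.
class OnceSingleton {
public:
    static OnceSingleton& getInstance() {
        std::call_once(initFlag, [] { instance = new OnceSingleton(); });
        assert(instance != nullptr);  // call_once has completed at this point
        return *instance;
    }

    OnceSingleton(const OnceSingleton&) = delete;
    OnceSingleton& operator=(const OnceSingleton&) = delete;

private:
    OnceSingleton() = default;
    ~OnceSingleton() = default;

    static std::once_flag initFlag;
    static OnceSingleton* instance;
};

std::once_flag OnceSingleton::initFlag;
OnceSingleton* OnceSingleton::instance = nullptr;
```

Both variants hand out the same object on every call, for example &MeyersSingleton::getInstance() == &MeyersSingleton::getInstance(), and neither requires you to reason about memory orderings yourself.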

What’s next?

As I promised, I’m done with the rules of concurrency. The next post is about the rules for error handling in the C++ core guidelines.



Rainer Grimm
